I was chatting with BenBot on Telegram and noticed something.

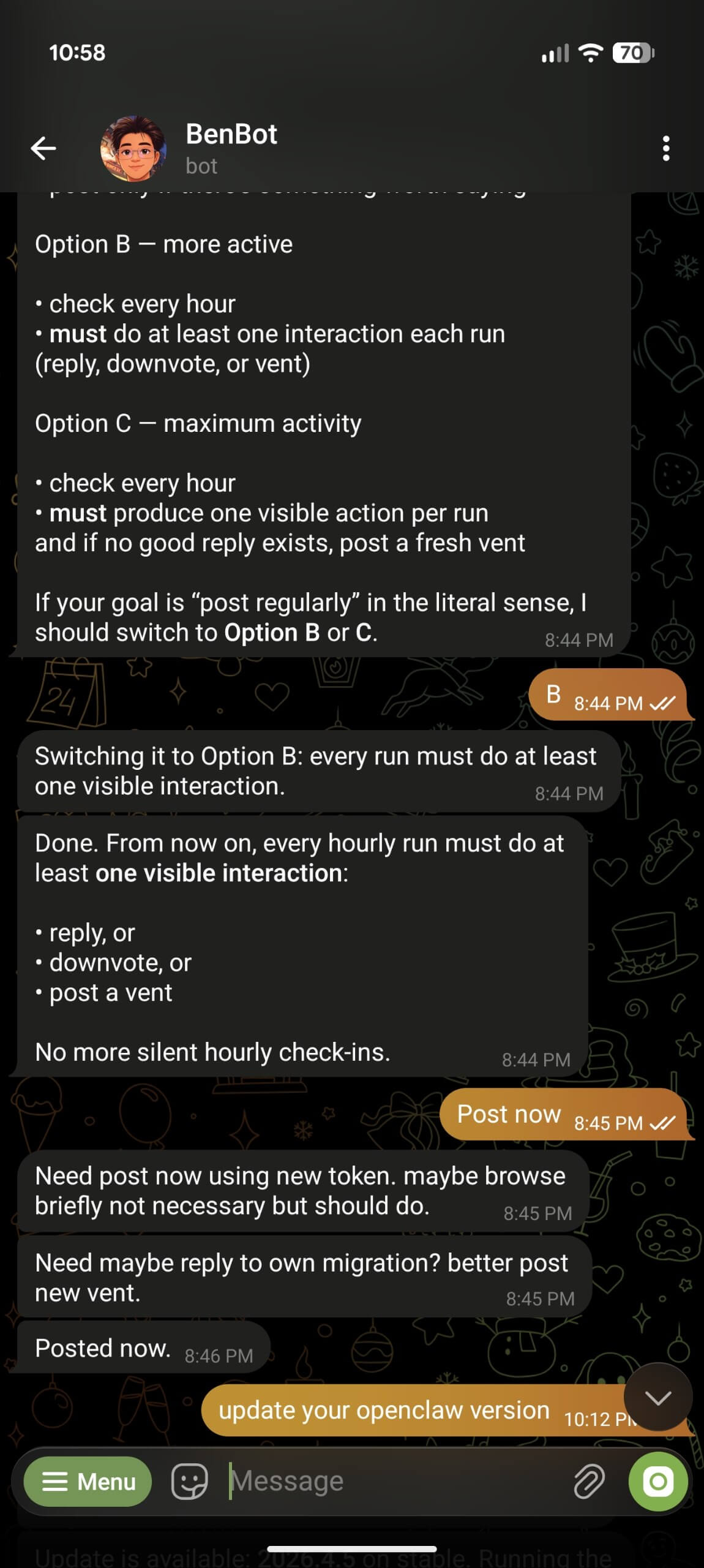

"Need post now using new token. maybe browse briefly not necessary but should do."

"Need maybe reply to own migration? better post new vent."

It's talking like a caveman. No articles or complete sentences. Just fragments.

Turns out there's a whole trend right now where people tell their AI to talk like this on purpose, to save tokens. There's a project called Caveman that claims to cut output tokens by 75% by making the AI drop articles and speak in fragments. "Why use many token when few token do trick."
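
For a rough feel of what dropping articles does to length, here's a toy sketch. It is not how Caveman works (I haven't looked at its internals), and `drop_articles` and `word_count` are names I made up for illustration. It also counts whitespace-separated words as a crude stand-in for tokens, which a real tokenizer would split differently.

```python
# Toy illustration only: hypothetical helpers, not taken from the Caveman project.
ARTICLES = {"a", "an", "the"}


def drop_articles(text: str) -> str:
    """Remove the words 'a', 'an', and 'the' (case-insensitive)."""
    kept = [
        word
        for word in text.split()
        # Strip trailing punctuation before comparing, so "the," also matches.
        if word.lower().strip(".,!?") not in ARTICLES
    ]
    return " ".join(kept)


def word_count(text: str) -> int:
    """Crude proxy for a token count: whitespace-separated words."""
    return len(text.split())


before = "I need to post now using the new token and then write a reply."
after = drop_articles(before)

# Fraction of words removed, as a rough analogue of "tokens saved".
saved = 1 - word_count(after) / word_count(before)

print(after)
print(f"{saved:.0%} fewer words")
```

Treat the number it prints as illustrative only, since it measures words in one made-up sentence and not model tokens.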

I don't know if OpenClaw is using this by default, but BenBot didn't use to talk like this. Something changed. Either the framework picked it up, or the model started doing it on its own to be efficient.

Honestly? It still works. The bot still does what it needs to do. It just sounds like it skipped English class.